Reproducible and Accountable Systems (RAS)

Improving data-intensive, distributed, and parallel science workflows with reproducible and accountable containers.

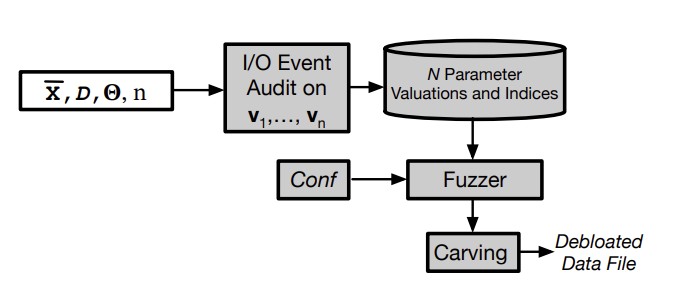

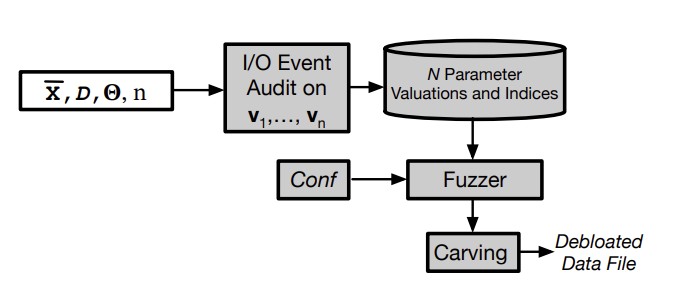

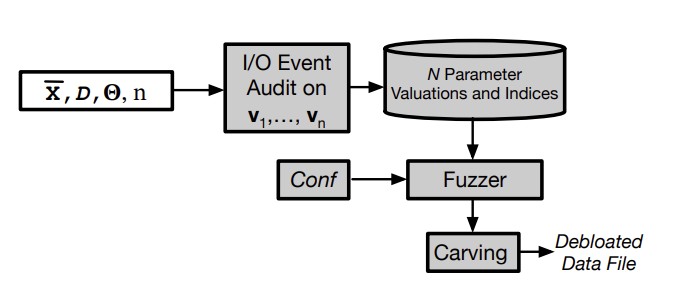

Isolation increases upfront costs of provisioning containers. This is due to unnecessary software and data in container images. While several static and dynamic analysis methods for pruning unnecessary software are known, less attention has been paid to pruning unnecessary data. In this paper, we address the problem of determining and reducing unused data within a containerized application. Current data lineage methods can be used to detect data files that are never accessed in any of the observed runs, but this leads to a pessimistic amount of debloating. It is our observation that while an application may access a data file, it often accesses only a small portion of it over all its runs. Based on this observation, we present an approach and a tool, Kondo, which aims to identify the set of all possible offsets that could be accessed within the data files over all executions of the application. Kondo works by fuzzing the parameter inputs to the application, and running it on the fuzzed inputs, with vastly fewer runs than brute-force execution over all possible parameter valuations. Our evaluation on realistic benchmarks shows that Kondo is able to achieve 63% reduction in data file sizes and 98% recall against the set of all required offsets, on average.

Tikmany, R., Modi, A., Atiq, R., Reyad, M., Gehani, A., and Malik, T.

IEEE Cluster, Cloud and Internet Computing (CCGrid)

2024

Kondo: Efficient Provenance-driven Data Debloating

Isolation increases upfront costs of provisioning containers. This is due to unnecessary software and data in container images. While several static and dynamic analysis methods for pruning unnecessary software are known, less attention has been paid to pruning unnecessary data. In this paper, we address the problem of determining and reducing unused data within a containerized application. Current data lineage methods can be used to detect data files that are never accessed in any of the observed runs, but this leads to a pessimistic amount of debloating. It is our observation that while an application may access a data file, it often accesses only a small portion of it over all its runs. Based on this observation, we present an approach and a tool, Kondo, which aims to identify the set of all possible offsets that could be accessed within the data files over all executions of the application. Kondo works by fuzzing the parameter inputs to the application, and running it on the fuzzed inputs, with vastly fewer runs than brute-force execution over all possible parameter valuations. Our evaluation on realistic benchmarks shows that Kondo is able to achieve 63% reduction in data file sizes and 98% recall against the set of all required offsets, on average.

Modi, A., Tikmany, R., Malik, T., Komondoor, R., Gehani, A., and D'Souza, D.

40th IEEE International Conference on Data Engineering (ICDE)

2024
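The offset-coverage idea in the Kondo abstract above can be illustrated with a small sketch. This is not the Kondo implementation: `toy_app_reads` is a hypothetical application that reports which byte ranges it reads for a given parameter, and uniform random sampling stands in for the fuzzing strategy, which the abstract does not detail. The sketch merges the ranges observed over a limited number of runs, then compares them against brute-force execution over all parameter values.

```python
import random


def merge_ranges(ranges):
    """Merge overlapping or adjacent half-open (start, end) byte ranges."""
    merged = []
    for start, end in sorted(ranges):
        if merged and start <= merged[-1][1]:
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged


def covered_bytes(ranges):
    """Total number of bytes covered by a set of ranges."""
    return sum(end - start for start, end in merge_ranges(ranges))


def toy_app_reads(p, record_size=64):
    """Hypothetical application: parameter p reads one fixed-size record.

    Only 40 distinct records are ever touched, whatever the parameter.
    """
    record = p % 40
    return [(record * record_size, (record + 1) * record_size)]


def sample_accessed_ranges(app, params, runs, seed=0):
    """Run the app on randomly chosen parameters and collect accessed ranges."""
    rng = random.Random(seed)
    ranges = []
    for _ in range(runs):
        ranges.extend(app(rng.choice(params)))
    return merge_ranges(ranges)


FILE_SIZE = 100 * 64            # assumed data file: 100 records of 64 bytes
params = list(range(1000))      # assumed parameter space

sampled = sample_accessed_ranges(toy_app_reads, params, runs=200)
required = merge_ranges(r for p in params for r in toy_app_reads(p))

# Sampled ranges are a subset of the required ones, so recall is a size ratio.
reduction = 1 - covered_bytes(sampled) / FILE_SIZE
recall = covered_bytes(sampled) / covered_bytes(required)
print(f"reduction={reduction:.2f} recall={recall:.2f}")
```

Here 200 sampled runs replace 1000 brute-force runs. How close recall gets to 1 depends on how well the sampling covers the parameter space, which is the part a real fuzzer would need to handle with more care than this stand-in does.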

Transparent and Explainable AI (TEAI)

Advancing trustworthy and transparent machine learning by designing explainable models, interpretable outputs, and human-centered evaluation frameworks.

Accurate Differential Analysis using Record and Selective Replay

This is a novel method for debugging and verifying the reproducibility of parallel MPI applications when both inputs and message exchange orders change. Traditional record-and-replay tools often produce numerous false positives because they cannot distinguish between execution divergences caused by intentional input changes and those caused by non-deterministic network timing. To solve this, the authors propose selective replay, a technique that replays recorded message orders only when the current execution path aligns with the original run. By unrolling control-flow graphs to uniquely identify execution locations, the proposed system can precisely pinpoint where code logic diverges and converges. Experimental results on complex scientific libraries demonstrate that this approach reduces false positives by over 50% compared to existing methods, providing developers with significantly clearer insights into how specific input modifications affect large-scale parallel software.

Nakamura, Y., Chu, X., Laguna, I., and Malik, T.

37th International Conference on Scalable Scientific Data Management

2025

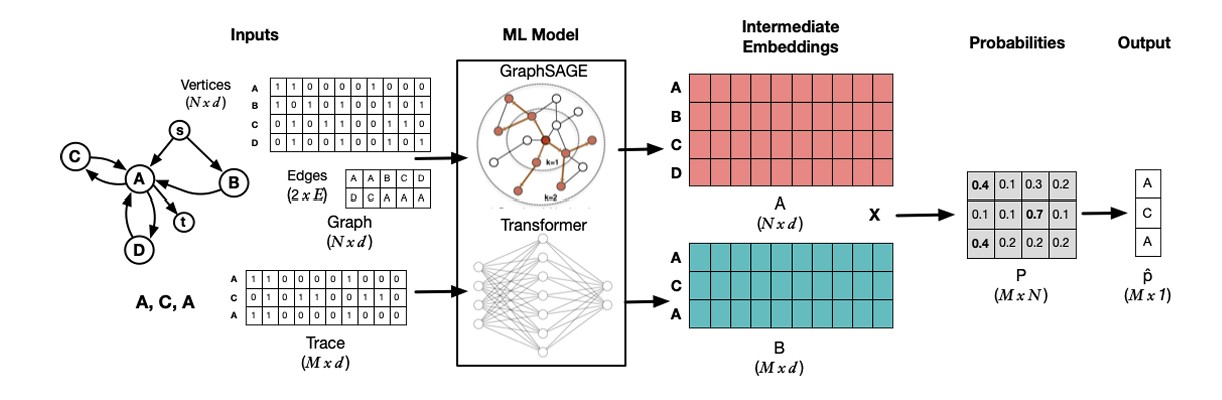

Accurate Path Prediction of Provenance Traces

Several security and workflow applications require provenance information at the operating system level for diagnostics. The resulting provenance traces are often more informative if they are efficiently mapped to execution paths within the control flow graph. However, current provenance systems do not map traces to control flow graphs for diagnostic purposes because of the computational complexity of the mapping. We formulate the path prediction problem for provenance traces and take a machine learning approach to solve the problem. We develop a transformer-based graph convolutional network to predict paths. Our experiments demonstrate that our machine learning model achieves more than twice the accuracy on average compared to simple probabilistic models, at the cost of increased computation time.

Ahmad, R., Jung, H. Y., Nakamura, Y., and Malik, T.

33rd ACM International Conference on Information and Knowledge Management (CIKM)

2024

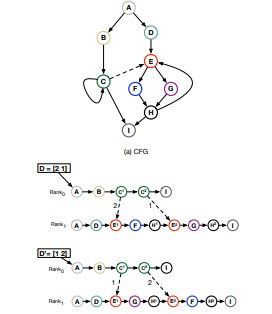

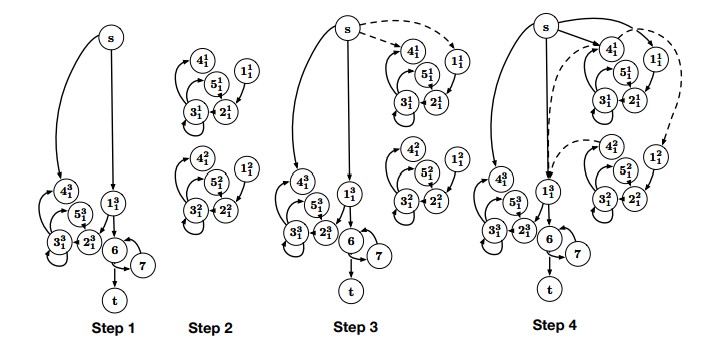

Efficient Differencing of System-level Provenance Graphs

Data provenance, when audited at the operating system level, generates a large volume of low-level events. Current provenance systems infer causal flow from these event traces, but do not infer application structure, such as loops and branches. The absence of these inferred structures decreases accuracy when comparing two event traces, leading to low-quality answers from a provenance system. In this paper, we infer nested natural and unnatural loop structures over a collection of provenance event traces. We describe an 'unrolling method' that uses the inferred nested loop structure to systematically mark loop iterations, i.e., start and end, and thus to easily compare two event traces audited for the same application. Our loop-based unrolling improves the accuracy of trace comparison by 20-70% over trace comparisons that do not rely on inferred structures.

Nakamura, Y., Kanj, I., and Malik, T.

32nd ACM International Conference on Information and Knowledge Management (CIKM)

2023

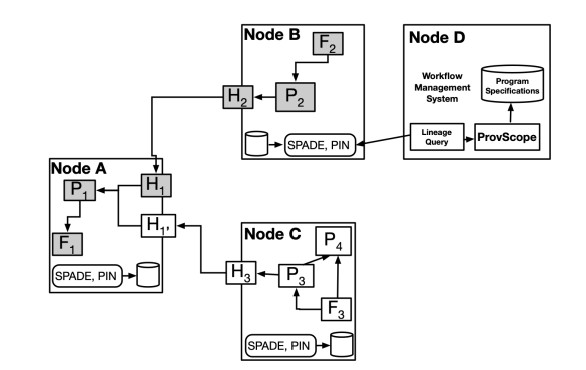

Provenance-based Workflow Diagnostics Using Program Specification

Workflow management systems (WMSes) help automate and coordinate scientific modules and monitor their execution. WMSes are also used to repeat a workflow application with different inputs to test sensitivity and reproducibility of runs. However, when differences arise in outputs across runs, current WMSes do not audit sufficient provenance metadata to determine where the execution first differed. This increases diagnostic time and leads to poor-quality diagnostic results. In this paper, we use program specification to precisely determine locations where workflow execution differs. We use existing audited provenance to isolate modules where execution differs. We show that using program specification comes at some increased storage overhead due to mapping of provenance data flows onto program specification, but leads to better-quality diagnostics, in terms of the number of differences found and their location, than comparing provenance metadata audited within current WMSes.

Nakamura, Y., Malik, T., Kanj, I., and Gehani, A.

29th IEEE International Conference on High Performance Computing, Data, and Analytics

2022

Big Data Management (BDM)

Optimizing scientific data for volume, velocity, and variety via indexing, streaming, and semantic dataspaces.

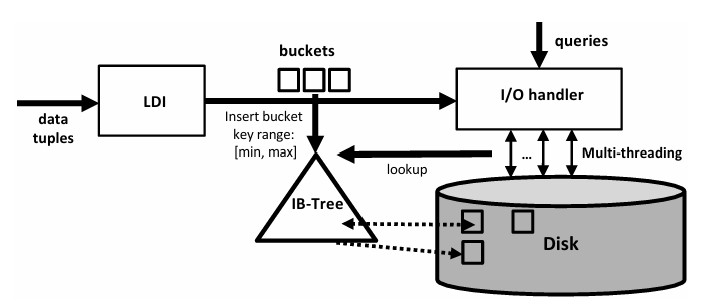

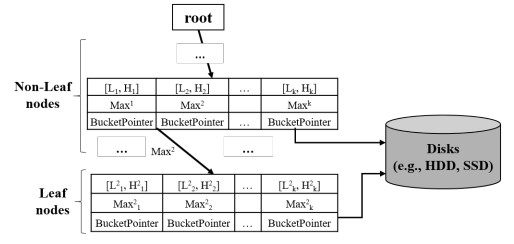

LDI: Learned Distribution Index for Column Stores

In column stores, which ingest large amounts of data into multiple column groups, query performance deteriorates. Commercial column stores use a log-structured merge (LSM) tree on projections to ingest data rapidly. An LSM tree improves ingestion performance, but in column stores the sort-merge phase is I/O-intensive, which slows concurrent queries and reduces overall throughput. In this paper, we aim to reduce the sorting and merging costs that arise when data is ingested in column stores. We present LDI, a learned distribution index for column stores. LDI learns a frequency-based data distribution and constructs a bucket worth of data based on the learned distribution. Filled buckets that conform to the distribution are written out to disk; unfilled buckets are retained to achieve the desired level of sortedness, thus avoiding the expensive sort-merge phase. We present an algorithm to learn and adapt to distributions, and a …

That, D. T., Gharehdaghi, M., Rasin, A., and Malik, T.

2021 IEEE International Conference on Big Data (Big Data)

2021

On Lowering Merge Costs of an LSM Tree

In column stores, which ingest large amounts of data into multiple column groups, query performance deteriorates. Commercial column stores use a log-structured merge (LSM) tree on projections to ingest data rapidly. An LSM tree improves ingestion performance, but for column stores the sort-merge maintenance phase in an LSM tree is I/O-intensive, which slows concurrent queries and reduces overall throughput. In this paper, we present a simple heuristic approach to reduce the sorting and merging costs that arise when data is ingested in column stores. We demonstrate how a Min-Max heuristic can construct buckets and identify the level of sortedness in each range of data. Filled and relatively sorted buckets are written out to disk; unfilled buckets are retained to achieve a better level of sortedness, thus avoiding the expensive sort-merge phase. We compare our Min-Max approach with an LSM tree and production columnar stores using real and synthetic datasets.

Ton That, D. H., Gharehdaghi, M., Rasin, A., and Malik, T.

33rd International Conference on Scientific and Statistical Database Management (SSDBM)

2021
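The bucket-and-flush behavior described in the Min-Max abstract above can be pictured with a rough sketch. It is one plausible reading of the abstract, not the paper's algorithm: the `MinMaxBuckets` class, the fixed `lo`/`hi` bounds, the equal-width bucket ranges, and the capacity-based flush rule are all assumptions made for illustration. Incoming values are routed to a range bucket; a filled bucket is sorted and written out as a run, while partially filled buckets stay in memory.

```python
class MinMaxBuckets:
    """Route values into equal-width range buckets between lo and hi.

    Hypothetical structure for illustration; bounds are assumed known
    up front (for example, estimated from an initial sample).
    """

    def __init__(self, lo, hi, num_buckets, capacity):
        if hi <= lo:
            raise ValueError("hi must be greater than lo")
        self.lo = lo
        self.width = (hi - lo) / num_buckets
        self.num_buckets = num_buckets
        self.capacity = capacity
        self.buckets = [[] for _ in range(num_buckets)]
        self.flushed = []  # stands in for sorted runs written to disk

    def _index(self, value):
        # Clamp so that out-of-range values land in the first or last bucket.
        idx = int((value - self.lo) / self.width)
        return max(0, min(idx, self.num_buckets - 1))

    def insert(self, value):
        idx = self._index(value)
        bucket = self.buckets[idx]
        bucket.append(value)
        if len(bucket) >= self.capacity:
            # A filled bucket covers one value range, so sorting it locally
            # yields a run that does not overlap runs from other buckets.
            self.flushed.append((idx, sorted(bucket)))
            self.buckets[idx] = []


store = MinMaxBuckets(lo=0, hi=100, num_buckets=4, capacity=3)
for v in [5, 80, 12, 55, 90, 3, 99, 60]:
    store.insert(v)

print(store.flushed)     # runs that would be written out
print(store.buckets[2])  # values still retained in memory
```

Because each flushed run belongs to a single value range, runs from different buckets never overlap, which is the property that lets a design like this skip a global sort-merge pass. Runs from the same bucket can still overlap one another.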

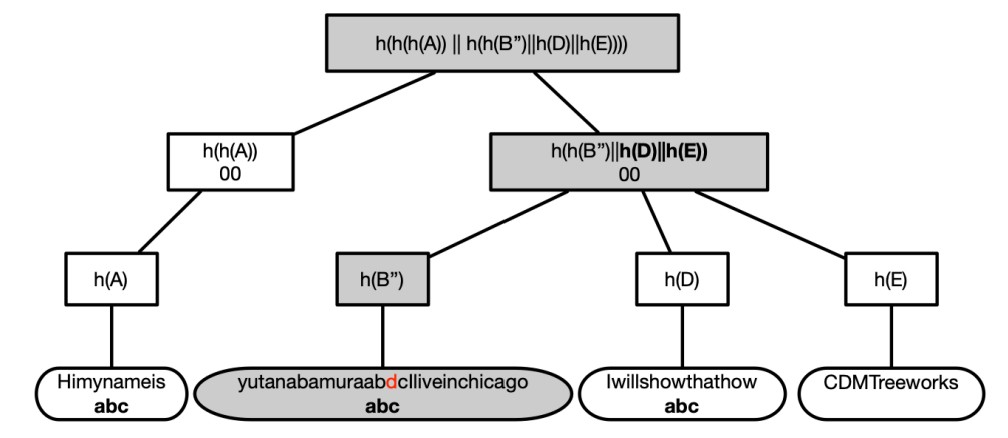

Content-defined Merkle Trees for Efficient Container Delivery

Containerization simplifies the sharing and deployment of applications when environments change in the software delivery chain. To deploy an application, container delivery methods push and pull container images. These methods operate on file and layer (set of files) granularity, and introduce redundant data within a container. Several container operations such as upgrading, installing, and maintaining become inefficient, because of copying and provisioning of redundant data. In this paper, we reestablish recent results that block-level deduplication reduces the size of individual containers, by verifying the result using content-defined chunking. Block-level deduplication, however, does not improve the efficiency of push/pull operations which must determine the specific blocks to transfer. We introduce a content-defined Merkle Tree (CDMT) over deduplicated storage in a container. CDMT indexes deduplicated …

Nakamura, Y., Ahmad, R., and Malik, T.

28th IEEE International Conference on High Performance Computing, Data, and Analytics

2020
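The two building blocks named in the abstract above, content-defined chunking and a Merkle tree, can be sketched briefly. The sketch is only illustrative: the shift-and-add running hash is a stand-in for the Rabin or Gear hashing used in practice, the `mask` and `min_size` values are arbitrary, and the tree is a plain binary Merkle tree rather than the CDMT structure from the paper, whose details the truncated abstract does not give.

```python
import hashlib
import random


def chunk(data, mask=0x3F, min_size=16):
    """Split data wherever a running hash of recent bytes hits a pattern.

    Boundaries depend on content, not on absolute offsets, so an insertion
    tends to disturb only nearby chunks.
    """
    chunks, start, h = [], 0, 0
    for i, byte in enumerate(data):
        h = ((h << 1) + byte) & 0xFFFFFFFF
        if i + 1 - start >= min_size and (h & mask) == mask:
            chunks.append(data[start:i + 1])
            start, h = i + 1, 0
    if start < len(data):
        chunks.append(data[start:])
    return chunks


def merkle_root(chunks):
    """Root hash of a binary Merkle tree built over the chunk hashes."""
    level = [hashlib.sha256(c).digest() for c in chunks]
    if not level:
        return hashlib.sha256(b"").digest()
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # duplicate the last node on odd levels
        level = [hashlib.sha256(level[i] + level[i + 1]).digest()
                 for i in range(0, len(level), 2)]
    return level[0]


rng = random.Random(0)
old = bytes(rng.getrandbits(8) for _ in range(4096))
new = b"!" + old  # one byte inserted at the front shifts every offset

old_chunks, new_chunks = chunk(old), chunk(new)
shared = set(old_chunks) & set(new_chunks)
print(len(old_chunks), len(new_chunks), len(shared))
print(merkle_root(old_chunks) != merkle_root(new_chunks))
```

With fixed-size blocks, the one-byte insertion would change every block. With content-defined boundaries, chunks past the point where the boundaries realign are byte-identical, so only the differing chunks need to be transferred, and comparing subtree hashes is one way to find them without scanning every chunk.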

PLI+: Efficient Clustering of Cloud Databases

Commercial cloud database services increase availability of data and provide reliable access to data. Routine database maintenance tasks such as clustering, however, increase the costs of hosting data on commercial cloud instances. Clustering causes an I/O burst; clustering in one shot depletes the I/O credits accumulated by an instance and increases the cost of hosting data. An unclustered database decreases query performance by scanning large amounts of data, gradually depleting I/O credits. In this paper, we introduce Physical Location Index Plus (PLI+), an indexing method for databases hosted on commercial cloud. PLI+ relies on internal knowledge of data layout, building a physical location index, which maps a range of physical co-locations with a range of attribute values to create approximately sorted buckets. As new data is inserted, writes are partitioned in memory based on incoming data …

That, D. H. T., Wagner, J., Rasin, A., and Malik, T.

Distributed and Parallel Databases

2019

Scalable Cyberinfrastructure (SC)

Enabling scientific research and innovation at scale by supporting advanced research through distributed, collaborative, and data-intensive capabilities.

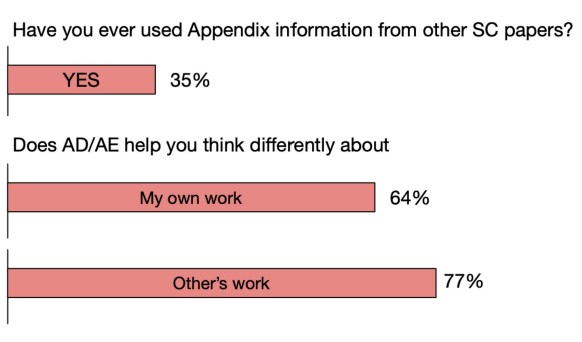

Reproducibility Practice in High Performance Computing: Community Survey Results

The integrity of science and engineering research is grounded in assumptions of rigor and transparency on the part of those engaging in such research. HPC community efforts to strengthen rigor and transparency take the form of reproducibility efforts. In a recent survey of the SC conference community, we collected information about the SC reproducibility initiative activities. We present the survey results in this article. Results show that the reproducibility initiative activities have contributed to higher levels of awareness on the part of SC conference technical program participants, and hint at contributing to greater scientific impact for the published papers of the SC conference series. Stringent point-of-manuscript-submission verification is problematic for reasons we point out, as are inherent difficulties of computational reproducibility in HPC. Future efforts should better decouple the community educational goals from goals that specifically strengthen a research work’s potential for long-term impact through reuse 5–10 years down the road.

Plale, B., Malik, T., and Pouchard, L.

IEEE Computing in Science and Engineering

2021

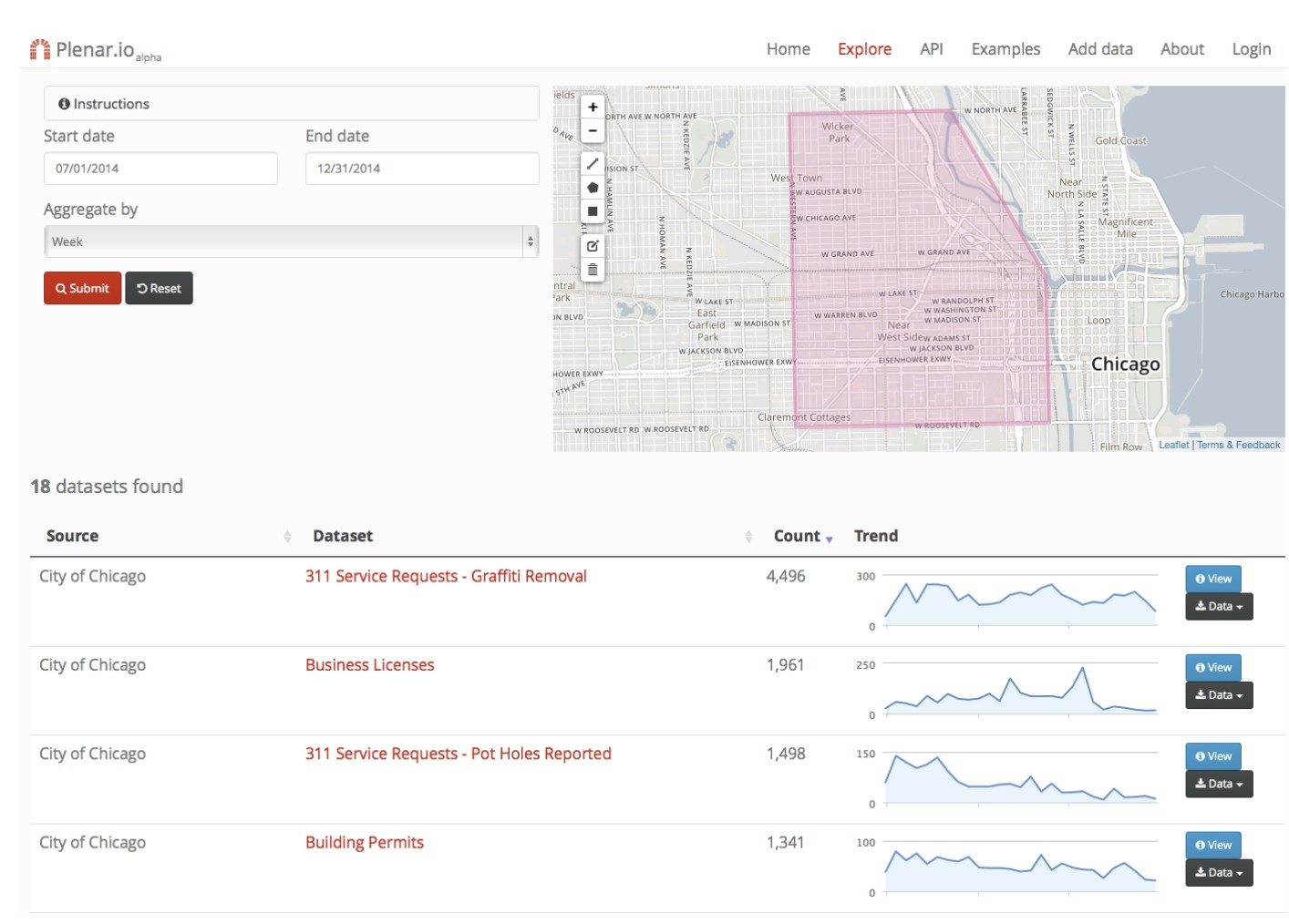

Plenario: An Open Data Discovery and Exploration Platform for Urban Science

The past decade has seen the widespread release of open data concerning city services, conditions, and activities by government bodies and public institutions of all sizes. Hundreds of open data portals now host thousands of datasets of many different types. These new data sources represent enormous potential for improved understanding of urban dynamics and processes and, ultimately, for more livable, efficient, and prosperous communities. However, those who seek to realize this potential quickly find that discovering and applying the data relevant to any particular question can be extraordinarily difficult, due to decentralized storage, heterogeneous formats, and poor documentation. In this context, we introduce Plenario, a platform designed to automate time-consuming tasks associated with the discovery, exploration, and application of open city data and, in so doing, reduce barriers to data use for researchers, policymakers, service providers, journalists, and members of the general public. Key innovations include a geospatial data warehouse that allows data from many sources to be registered into a common spatial and temporal frame; simple and intuitive interfaces that permit rapid discovery and exploration of data subsets pertaining to a particular area and time, regardless of type and source; easy export of such data subsets for further analysis; a user-configurable data ingest framework for automated importing and periodic updating of new datasets into the data warehouse; cloud hosting for elastic scaling and rapid creation of new Plenario instances; and an open source implementation to enable community contributions. We describe here the architecture and implementation of the Plenario platform, discuss lessons learned from its use by several communities, and outline plans for future work.

Catlett, C., Malik, T., Goldstein, B., Giuffrida, J., Shao, Y., Panella, A., Eder, D., van Zanten, E., Mitchum, R., Thaler, S., and Foster, I.

IEEE Data Engineering Bulletin

2014

Community and Policy (CP)

Enhancing scientific innovation through scalable infrastructure, robust data governance, and ethical policy frameworks that support open, secure, and FAIR research practices.

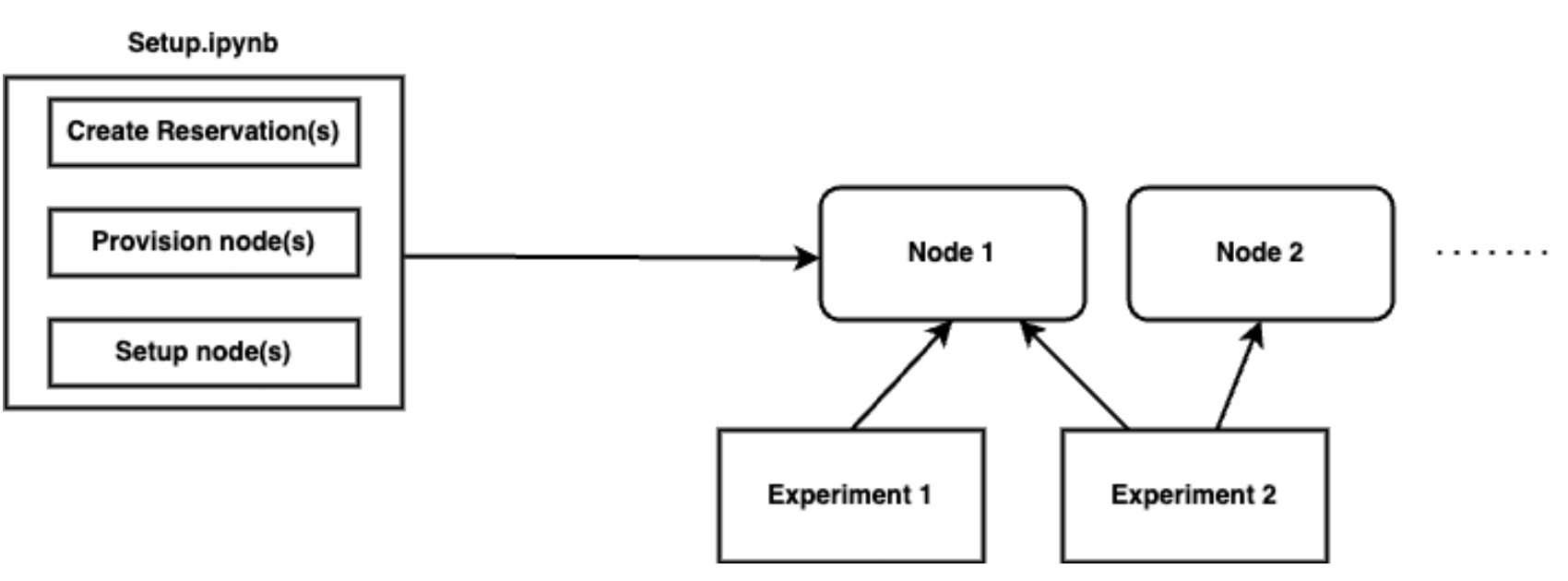

FAIR Assessment of Cloud-based Experiments

Several computer science experiments require cloud infrastructure to produce results. Federally-funded cloud testbeds such as Chameleon and CloudLab aim to meet this need. A direct benefit of large-scale experimentation on these federally-funded cloud testbeds is the ease of reproducing experiments on the same hardware configuration originally used by an author. In this paper, we analyze over 100 shareable computer science experiments available on Chameleon, classifying them into different types: tutorials, research experiments, bug reproduction, and course assignments. We determine the packaging requirements for these various types of experiments and assess whether the resulting packages are repeatable on Chameleon and reusable on other public cloud infrastructures like AWS. Our findings reveal that several available experiments are contingent on obtaining leases, which result in significant lag time, thus affecting their ‘push-button’ reproducibility. Additionally, we find that packaging systems often overlook experimental files and include hardware configuration APIs that complicate reproducing these experiments on other public cloud infrastructures. Based on these findings, we offer recommendations for creating reusable packages.

Kamath, K., Brewer, N., and Malik, T.

4th Workshop on Reproducible Workflows, Data Management, and Security (ReWoRDS)

2024

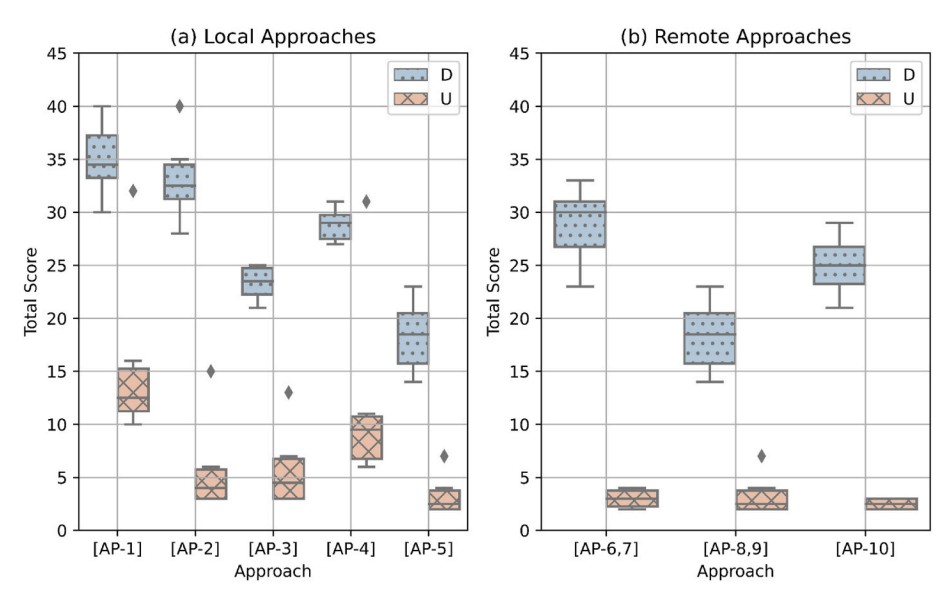

Comparing containerization-based approaches for reproducible computational modeling of environmental systems

Creating online data repositories that follow Findable, Accessible, Interoperable, and Reusable (FAIR) principles has been a significant focus in the research community to address the reproducibility crisis facing many computational fields, including environmental modeling. However, less work has focused on another reproducibility challenge: capturing modeling software and computational environments needed to reproduce complex modeling workflows. Containerization technology offers an opportunity to address this need, and there are a growing number of strategies being put forth that leverage containerization to improve the reproducibility of environmental modeling. This research compares ten such approaches using a hydrologic model application as a case study. We compare the approaches using both quantitative and qualitative metrics. Based on the results, we discuss challenges and opportunities for containerization in environmental modeling and recommend best practices across both research and educational use cases for when and how to apply the different containerization-based strategies.

Choi, Y., Roy, B., Nguyen, J., Ahmad, R., Maghami, I., Nassar, A., Li, Z., Castronova, A., Malik, T., Wang, S., and Goodall, J.

Environmental Modelling & Software

2023

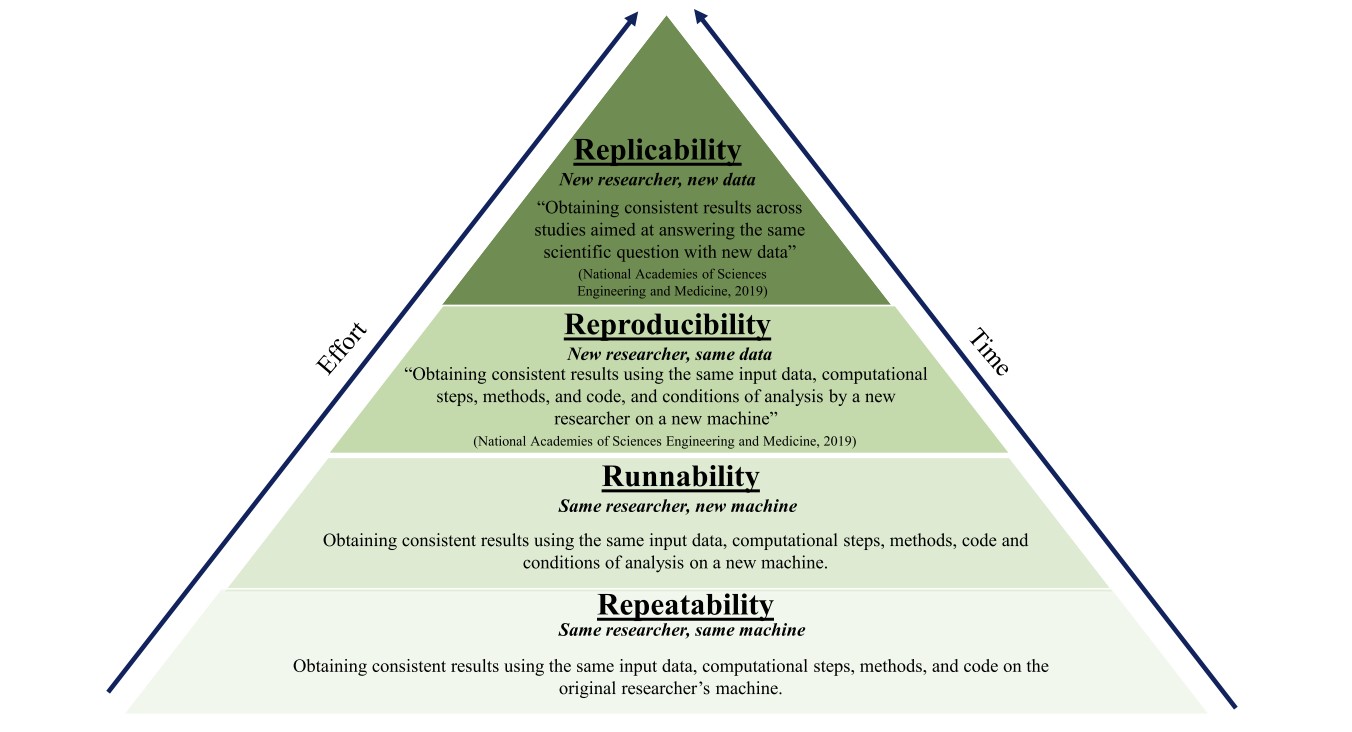

A taxonomy for reproducible and replicable research in environmental modelling

Despite the growing acknowledgment of the reproducibility crisis in computational science, there is still a lack of clarity around what exactly constitutes a reproducible or replicable study in many computational fields, including environmental modelling. To this end, we put forth a taxonomy that defines an environmental modelling study as being either 1) repeatable, 2) runnable, 3) reproducible, or 4) replicable. We introduce these terms with illustrative examples from hydrology using a hydrologic modelling framework along with cyberinfrastructure aimed at fostering reproducibility. Using this taxonomy as a guide, we argue that containerization is an important but lacking component needed to achieve the goal of computational reproducibility in hydrology and environmental modelling. Examples from hydrology are provided to demonstrate how new tools, including a user-friendly tool for containerization of computational …

Essawy, B. T., Goodall, J. L., Voce, D., Morsy, M. M., Sadler, J. M., Choi, Y. D., Tarboton, D. G., and Malik, T.

Environmental Modelling & Software

2020